From gut feeling to fact-based decision-making - with AI?

What if your non-executive board and C-suite could utilise AI to replace gut feeling with objective deliberations as the basis for decision-making? How would that impact the strategic and financial health of your company?

Through my work as an advisor to boards and C-level executives, as a non-executive board member for several companies, and as board chair of a company working with board recruitment, I have had several discussions on the use of AI tools, and in particular ChatGPT, in board proceedings.

Background and Perspective

First, a few words about myself and where my opinions stem from. Academically, I have two Master's degrees in biophysics and operational research (applied mathematics), respectively. Currently I am also working on a Bachelor's degree in law. Throughout my entire career of about 25 years, I have worked with data-fuelled strategy and business development.

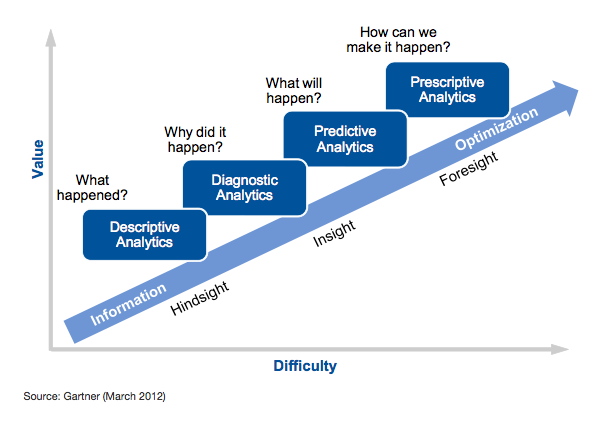

In 2012, Gartner published the illustration below, describing the levels of analytical approach to understanding business operations.

At that time, I was in an executive position in the insurance industry, and we spent a lot of time trying to explain to our peers how we could use analytical tools to approach decision-making through facts and objective arguments rather than gut feeling. In 2012.

The Tools Have Been Here for Years

Since March 2012, digital transformation has brought about digital representations of both processes and assets at scale. Tools for extraction, synthesis, analysis, and presentation are abundant, and they have been abundant for more than a decade.

Recently, an experienced board director told me he would like to have an AI board member who could prepare the background information for him and tell him what the critical issues are. In addition, an AI board member could make up for those board members who were not prepared and had not read the preparatory material, and make sure that lack of preparation did not lead to gut feeling being the deciding factor in the room.

A few days ago, I had a similar conversation with another experienced board director who wants AI to help the members of the family-owned company to make more objective decisions based on facts, numbers, and risk assessments, in particular for investment decisions.

AI as an Enhancer, Not a Substitute

My reply to both of them was the same: yes, AI tools can most definitely be useful. Those who have read my article about The Three Buckets will quickly recognise that the main category of tools we are talking about here is personal productivity tools, such as ChatGPT, Copilot, and Claude.

Why, when that is the least valuable Bucket in terms of strategic importance? Because of accessibility. Chatbots are extremely intuitive and easy to use. They are remarkably capable of answering complex questions across domains, data types, and documents (although capabilities vary across tools). They are also dangerously good at confabulations and nonsense.

The key human skills to consider here are:

- the ability to frame questions (aka prompts)

- the capability to quickly recognise whether the output is valuable or nonsense

However, both of these critical skills require a strong analytical mindset and a solid understanding of the underlying material.

This is where the dragon bites its tail.

If your board members do not have the analytical mindset to ask the right questions in the first place, AI is not going to be helpful. On the contrary, it is likely to mislead the board into deeper thoughtlessness. AI cannot and should not be applied as a compensator, but as an enhancer. The AI toolbox can indeed be very powerful when used by people with the relevant expertise and appropriate skills. Make sure those skills are present in both your non-executive board and your C-suite. If they are not, that’s where you should start your transition towards fact-based decision-making.

A Necessary Security Disclaimer

I have to add a disclaimer here: information security is essential. This entails balancing licence levels and security settings with the confidential nature of the documentation. Before any board or C-suite starts using chatbots for proceedings, proper risk assessments should be performed, policies established, and licences with appropriate security levels acquired.

If your board or leadership team would benefit from more constructive and fact-based discussions about AI and decision-making, I work with organisations through keynotes and advisory engagements to help boards and executives build the understanding needed to use AI responsibly and strategically.

Feel free to get in touch if you would like to continue the conversation.

About the Author

Elin Hauge is a keynote speaker, AI strategist, and trusted advisor to business leaders and boards. She specialises in helping organisations make sense of artificial intelligence beyond the hype, connecting technology to strategy, governance, and real-world value. With a multidisciplinary background in physics, mathematics, business, and law, Elin brings both analytical rigour and practical perspective. Her talks and advisory work empower leaders to ask better questions, make wiser decisions, and navigate AI with confidence.

Frequently Asked Questions:

-

Yes. AI tools can support preparation and analysis, but they work best as an enhancer for people with strong analytical skills and a solid understanding of the underlying material.

-

Chatbots can produce plausible but incorrect outputs. Without strong prompting and critical evaluation, they may mislead decision-makers into deeper thoughtlessness.

-

They need the ability to frame good questions and quickly assess whether an output is valuable or nonsense, grounded in a strong analytical mindset and understanding of the subject.

-

Before using chatbots in proceedings, boards and executives should perform risk assessments, establish policies, and ensure licences and security settings match the confidentiality of their documentation.